Hey hey,

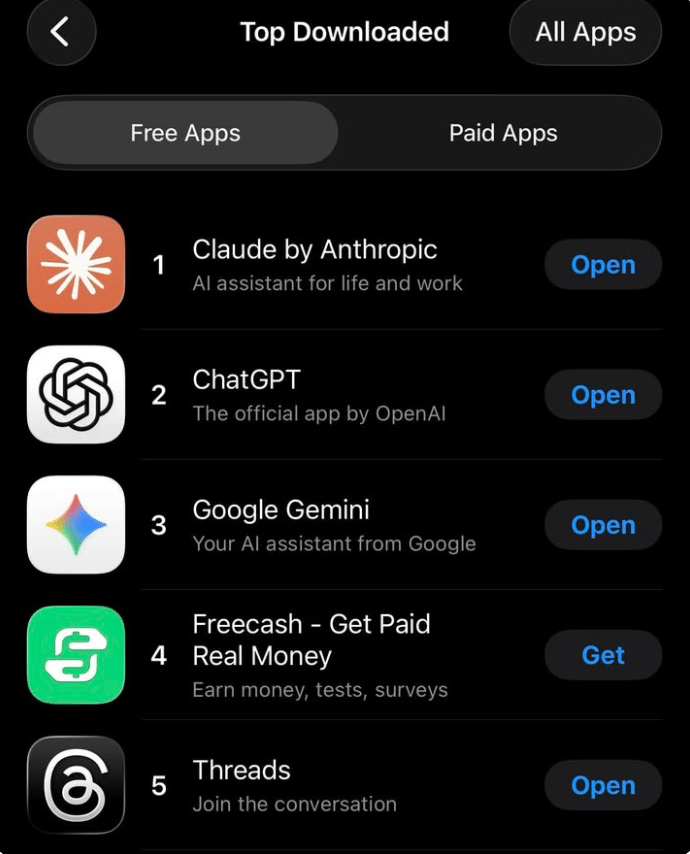

On March 1, 2026, Claude (Anthropic's AI assistant) hit number one on Apple's App Store. It overtook ChatGPT. The app that had dominated the category for over a year. Gone. Just like that.

Two days earlier, Claude's parent company had lost a $200 million Pentagon contract. Got banned from every federal agency. Was declared a "supply-chain risk to national security" — the first American company to ever receive that label.

And their biggest competitor signed a Pentagon deal the same day. By every traditional measure, Anthropic should have been finished. Instead, they had the biggest week in the company's history.

This is the story of how saying no to $200 million turned out to be the smartest product decision in AI.

The Underdog

Anthropic is not the company most people think of when they think of AI. That is OpenAI. Sam Altman. ChatGPT. OpenAI is backed by Microsoft with over $13 billion in investment. It has 900 million weekly users and is the most recognisable AI brand on the planet.

Anthropic was founded in 2021 by Dario and Daniela Amodei, siblings who left OpenAI because they believed safety was not being taken seriously enough. They took a handful of key researchers with them. They started smaller. They moved more slowly.

They built Claude.

By early 2026, Claude was good. Really good. Opus 4.5, released in late 2025, had topped benchmarks in coding, reasoning, and analysis. But in market share, Anthropic was still the underdog. ChatGPT was the default.

Google's Gemini had the distribution advantage of being baked into every Android device and Google search. Anthropic had one thing neither competitor could match: a reputation for taking safety seriously. And they had just made a bet that this reputation was worth more than a government contract.

The $200 Million Contract

In July 2025, Anthropic won a $200 million agreement with the Pentagon to deploy Claude on classified defense networks through Palantir. It was historic. Claude became the first commercial AI model on the Pentagon's classified systems. But Anthropic negotiated something unusual into the contract.

Two red lines. Claude could not be used for mass surveillance of American citizens. And Claude could not be used to power fully autonomous weapons. These were not technical limitations. Claude was technically capable. These were policy decisions, business choices Anthropic made about how its product could be deployed.

At the time, it seemed like a reasonable negotiation. A company setting terms for its own product. Standard stuff.

Then the rules changed.

The Ultimatum

On January 9, 2026, Defence Secretary Pete Hegseth issued a memorandum declaring the Pentagon would become an "AI-first warfighting force." The directive was blunt: all AI contracts must include "any lawful use" language within 180 days. No company could restrict how the military used their models.

This directly contradicted Anthropic's two red lines.

Weeks of negotiations followed. The Pentagon's technology chief, Emil Michael, offered a compromise: written acknowledgements of existing federal laws against autonomous weapons and mass surveillance. Anthropic called the language meaningless: "paired with legalese that would allow those safeguards to be disregarded at will."

Michael told CBS News:

"At some level, you have to trust your military to do the right thing."

Anthropic CEO Dario Amodei did not budge.

"We cannot in good conscience accede to their request"

Think about this from a product perspective. A startup. Its biggest government client. $200 million on the table. And the CEO says no.

The Fallout

On February 27, at 5:01 PM — the deadline — the hammer fell.

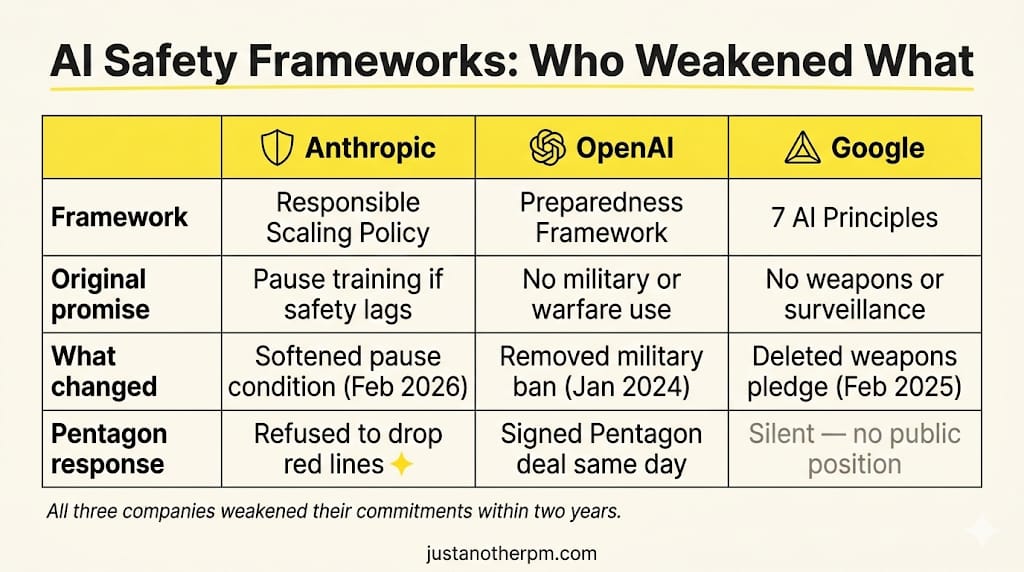

President Trump ordered every federal agency to stop using Anthropic's products. Hegseth declared Anthropic a supply-chain risk to national security. And OpenAI CEO Sam Altman announced a Pentagon deal hours later — claiming OpenAI shared the exact same red lines Anthropic had been punished for.

Dean Ball, one of Trump's own former AI advisers, called the move "attempted corporate murder." Senator Elizabeth Warren accused the administration of extortion.

More than 60 OpenAI employees signed an open letter called "We Will Not Be Divided." Over 200 Google employees asked their leadership to adopt the same red lines.

The entire AI industry was watching. And then something nobody predicted happened.


The Rebound

Within 48 hours, Claude was the number one app on the App Store. Overtaking ChatGPT for the first time ever. Anthropic's free user count had increased by over 60% since January, with daily sign-ups tripling and breaking all-time records every day that week. Social media exploded with people calling to dump ChatGPT.

Two coalitions of workers from Amazon, Google, Microsoft, and OpenAI asked their companies to join Anthropic in refusing the Pentagon's demands.

Anthropic had just turned a government ban into the most effective marketing campaign in AI history. And they did not spend a single dollar on it.

This is the part that matters for product people. Anthropic did not plan this outcome. They were not running a growth hack. They made a product decision — we will not allow our product to be used for these two things — and held that line when every incentive pushed them to fold.

The market rewarded it. Not because consumers suddenly cared about AI policy. But because in a market where every AI product feels interchangeable, Anthropic had done something none of their competitors could: they gave people a reason to choose them that had nothing to do with benchmarks.

Why the Competitors Couldn't Follow

Here is the context that makes this story even more striking.

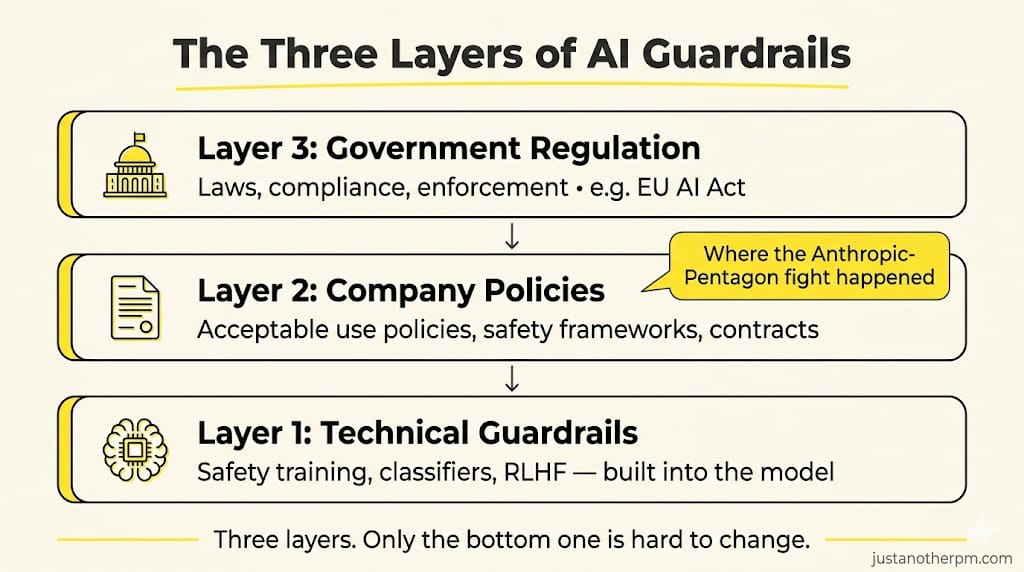

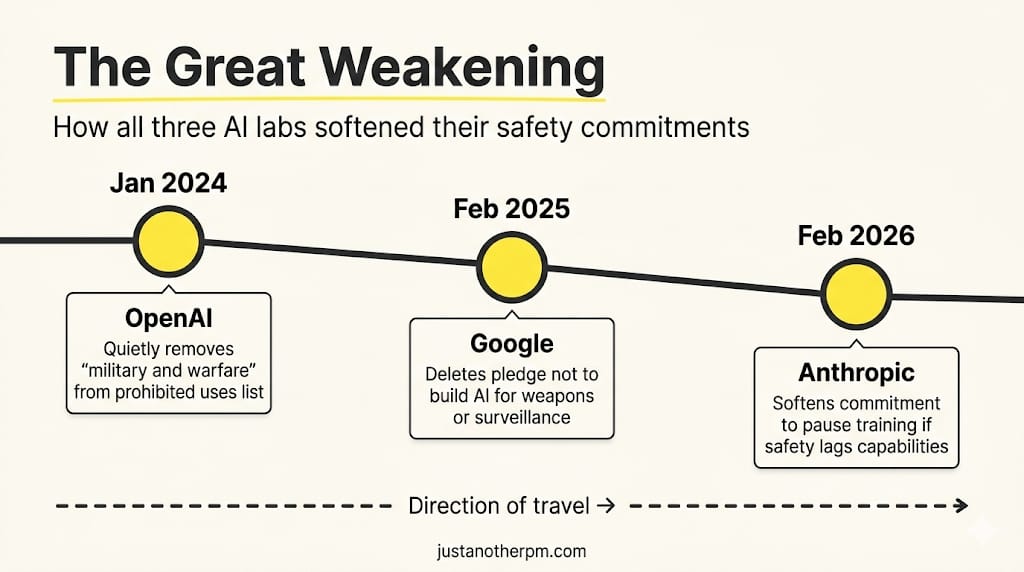

Anthropic was not the first AI company to set safety limits. All three major labs did. And all three weakened them.

In January 2024, OpenAI quietly removed the phrase "military and warfare" from its list of prohibited uses. The blanket ban on military applications disappeared.

In February 2025, Google deleted its pledge not to develop AI for weapons or surveillance — a commitment born from the Project Maven controversy, where thousands of employees protested Google's work with the Pentagon on AI-powered drone surveillance analysis.

Even Anthropic itself released version 3.0 of its Responsible Scaling Policy (RSP) three days before the Pentagon deadline, softening its commitment to pause model training if capabilities outpaced safety controls.

But on the specific question — will you let the military use your product for mass surveillance and autonomous weapons — Anthropic drew a line that OpenAI and Google had already erased. That gap is what made the consumer reaction so powerful. People could see, in real time, which company was willing to walk away and which ones were not.

Trust As a Product Feature

The traditional playbook for winning in tech is: build the best product, ship fast, capture market share. Safety and ethics are compliance costs. Things you handle in a policy document, not in the product roadmap.

Anthropic's story challenges that. Their safety commitments were not a cost centre. They were the product.

Anthropic had invested heavily in what they call Constitutional AI — training the model against a set of principles that define what it should and should not do. Their classifiers survived over 3,000 hours of red-teaming by 183 security researchers. Their Responsible Scaling Policy defined capability thresholds that triggered mandatory safety requirements.

None of this was marketing. It was engineering and policy work that most users never saw. But when the Pentagon standoff happened, all of that invisible work became visible. The years of safety investment suddenly had a face — a CEO saying no on national television — and consumers responded.

The lesson is counterintuitive: in a market where products are converging on similar capabilities, the thing that differentiates you might not be a feature. It might be a principle.

The AI Market After February 27

The Anthropic-Pentagon standoff reshaped the competitive landscape.

Before February 27, the AI race looked like a pure capabilities competition. Who has the best model? Who ships the fastest? Who gets the biggest contracts?

After February 27, a second axis appeared: trust. Consumers, developers, and enterprise buyers now had a live example of what it looks like when an AI company is tested on its values, and what it looks like when it passes.

OpenAI still has more users. Google still has more distribution. But Anthropic now owns something neither can easily replicate: a story. A moment where they chose principle over profit, and the market rewarded them for it.

That is not a moat you build with better infrastructure. It is a moat you build with decisions. And it is the hardest kind to copy, because your competitors would have to make the same sacrifice — and they have already shown they will not.

The question every PM should be asking right now is not just "what does our product do?" It is: "what does our product refuse to do — and would we hold that line if it cost us $200 million?"

Most companies never get tested like Anthropic did. But the ones who know their answer before the question arrives are the ones who build brands that last.

See you in the next one,

— Sid

This article draws on publicly reported details from multiple news sources. If you notice any inaccuracies, please let me know.